Introduction

The age of automation has evolved. We’re no longer talking only about simple rule-based bots, but increasingly about systems powered by artificial intelligence (AI) and machine learning (ML): systems that can learn, adapt and act with minimal human input. The promise is enormous (higher efficiency, reduced cost, faster decision-making), but the risks are real and growing.

In this post, we’ll explore why risk management matters in AI-powered automation, identify key pain points and danger zones, and show how a PeopleOps team can help its organisation navigate this terrain. We’ll use plain, accessible language alongside the relevant industry terms, and cover the topic in enough depth for both technical and business readers.

Why This Matters for PeopleOps

For PeopleOps, AI-powered automation is not just an IT or engineering issue: it directly touches people, processes and performance.

- When automation replaces or augments tasks, it affects roles and job design and raises questions about skills, reskilling and change management.

- When decisions are made (or assisted) by AI systems, “who’s responsible?” becomes a core concern, especially if something goes wrong.

- Employee trust, culture and fairness are deeply affected: for example, does your AI hiring-automation tool disadvantage certain groups (bias)?

- Compliance, data privacy and ethical governance fall within PeopleOps’ remit (especially when employee data is involved).

- The business wants results in the form of faster, smarter automation, but PeopleOps must ensure that the organisation avoids unintended consequences, reputational damage, legal risk and disengagement.

In short: AI automation is a people risk as much as a technology risk. Managing it requires collaboration across HR/PeopleOps, technologists, legal/compliance, and business leadership.

What Are the Main Risks of AI-Powered Automation?

Here are the key risk dimensions you’ll want to understand and manage. Each opens a potential pain point for PeopleOps.

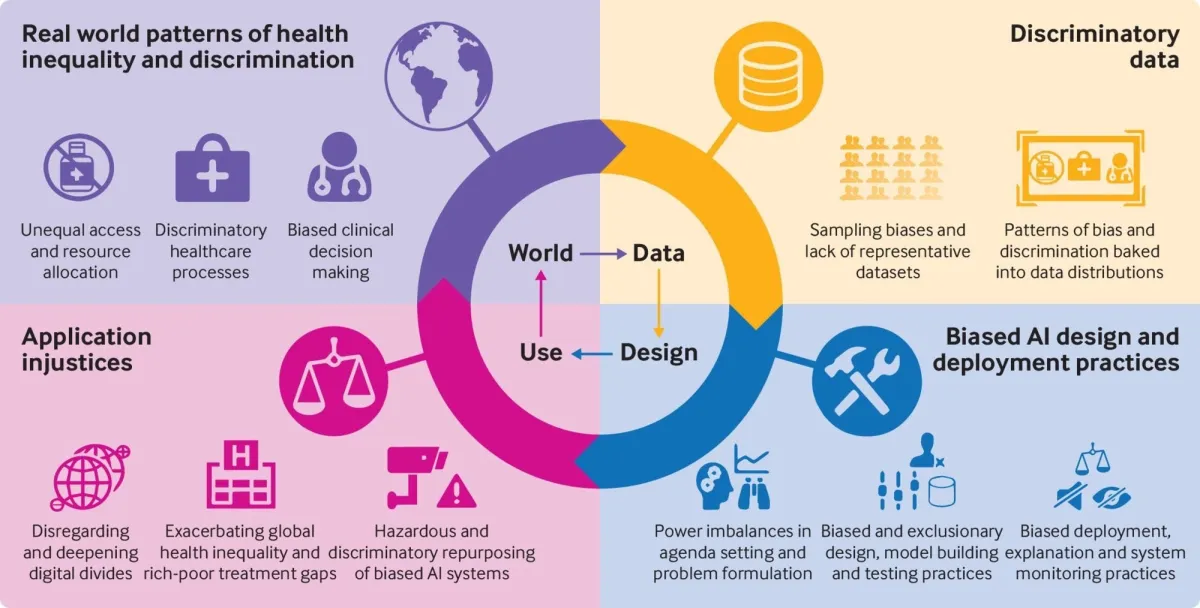

1. Bias, fairness & discrimination

AI systems learn from data. If that data contains historic bias (by gender, ethnicity, age or geography), the automation can perpetuate or even amplify unfair decisions. For example, an applicant-tracking system might reject more women than men, or a performance-scoring tool might systematically undervalue certain groups (source: IBM).

Pain point for PeopleOps: If your organisation introduces AI in recruitment, performance review or talent-allocation, you risk undermining fairness, trust or even legal compliance.
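One common way to put a number on this risk is to compare selection rates across groups. The sketch below is a minimal illustration, assuming a hypothetical list of (group, selected) outcomes from a screening tool. The 0.8 threshold follows the "four-fifths" rule of thumb from US employment-selection guidance; it is a screening signal that prompts a closer look, not a legal test. The function names and sample data are invented for this example.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Return the share of selected candidates per group.

    `outcomes` is an iterable of (group, selected) pairs, where `selected`
    is True when the tool advanced the candidate.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 1.0 for group in rates}  # nobody was selected at all
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical screening outcomes, invented for illustration.
sample = (
    [("women", True)] * 30 + [("women", False)] * 70
    + [("men", True)] * 50 + [("men", False)] * 50
)

rates = selection_rates(sample)
ratios = adverse_impact_ratios(rates)
# Groups whose ratio falls below 0.8 deserve a closer look.
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)
print(rates, flagged)
```

A flagged group is a prompt for investigation (of the data, the model and the process around it), not proof of discrimination on its own.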

2. Data quality, governance & privacy

AI relies on vast quantities of data. If the data is poor quality, incomplete, unrepresentative or wrongly labelled, outcomes will suffer. The privacy of employee data is also critical: are you using personal information, is consent properly handled, and is the data secured? (Source: McKinsey & Company.)

Pain point: PeopleOps may need to ensure that employee data used in AI systems meets governance standards and is ethically handled, and that the system is transparent.
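A basic completeness and consent check can be run before any employee record reaches a model. The following is a minimal sketch under stated assumptions: the field names (`employee_id`, `skills`, `role`, `consent_given`) and the sample records are hypothetical, and a real pipeline would follow whatever schema and consent model your organisation has agreed.

```python
# Hypothetical fields that a model input record is expected to carry.
REQUIRED_FIELDS = ("employee_id", "skills", "role", "consent_given")


def completeness_report(records, required=REQUIRED_FIELDS):
    """Return, per field, the share of records with a usable value."""
    if not records:
        return {field: 0.0 for field in required}
    report = {}
    for field in required:
        # None, empty strings and empty lists count as missing.
        filled = sum(1 for r in records if r.get(field) not in (None, "", []))
        report[field] = filled / len(records)
    return report


def consented_records(records):
    """Keep only records where the employee has explicitly consented."""
    return [r for r in records if r.get("consent_given") is True]


# Hypothetical employee records, invented for illustration.
records = [
    {"employee_id": 1, "skills": ["sql"], "role": "analyst", "consent_given": True},
    {"employee_id": 2, "skills": [], "role": "engineer", "consent_given": True},
    {"employee_id": 3, "skills": ["python"], "role": "", "consent_given": False},
    {"employee_id": 4, "skills": ["design"], "role": "designer"},
]

report = completeness_report(records)
usable = consented_records(records)
print(report)
print([r["employee_id"] for r in usable])
```

Treating a missing consent flag the same as a refusal is a deliberate design choice here: the record is excluded unless consent is explicit.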

3. Explainability, transparency & trust

Many AI systems, especially deep-learning or complex ML models, behave like “black boxes”. For PeopleOps and business leaders alike, this raises three questions:

- Why did the system make this decision?

- Can humans intervene or override the system?

- Will employees trust that the system is fair and reliable?

A phenomenon called the “AI trust paradox” arises: the more human-like and convincing the AI result appears, the harder it is for users to spot when it’s wrong (source: Wikipedia).

Pain point: If employees mistrust the automation or suspect bias or “unfairness”, adoption falters or backlash rises.

4. Operational & process risks

Even reliable AI systems can rest on fragile assumptions that break when external data changes or when the system meets different geographies, languages or contexts. An AI-powered process may perform well in one region but fail in another. For example, a bank found that its OCR and automation pipeline was confusing characters such as the letter “O” and the digit “0” in account identifiers, leading to mis-mapped customer data (source: McKinsey & Company).

Pain point: PeopleOps must coordinate with operations & tech to ensure process-monitoring, change-control, fallback to human review, etc.
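The “O” versus “0” example suggests a simple control: validate each extracted value against its expected format, and send anything that only becomes valid after a character swap to a person instead of correcting it silently. The sketch below assumes a hypothetical identifier format (two uppercase letters followed by six digits) and an illustrative look-alike table; neither comes from the bank in the example.

```python
import re

# Hypothetical identifier format: two uppercase letters, then six digits.
ACCOUNT_ID = re.compile(r"[A-Z]{2}\d{6}")

# Illustrative letter -> digit pairs that OCR can confuse.
LOOKALIKES = {"O": "0", "I": "1", "S": "5", "B": "8"}


def triage(ocr_text):
    """Classify an OCR'd identifier as 'accept', 'review' or 'reject'."""
    value = ocr_text.strip().upper()
    if ACCOUNT_ID.fullmatch(value):
        return "accept"
    # Only the digit part is checked for look-alikes in this sketch.
    prefix, digits = value[:2], value[2:]
    repaired = prefix + "".join(LOOKALIKES.get(ch, ch) for ch in digits)
    if ACCOUNT_ID.fullmatch(repaired):
        return "review"  # plausible, but a person should confirm the fix
    return "reject"


for candidate in ("GB102045", "GB1O2045", "GB12"):
    print(candidate, triage(candidate))
```

Routing the ambiguous case to review, instead of auto-repairing it, keeps a human accountable for exactly the kind of error that caused the mis-mapped data.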

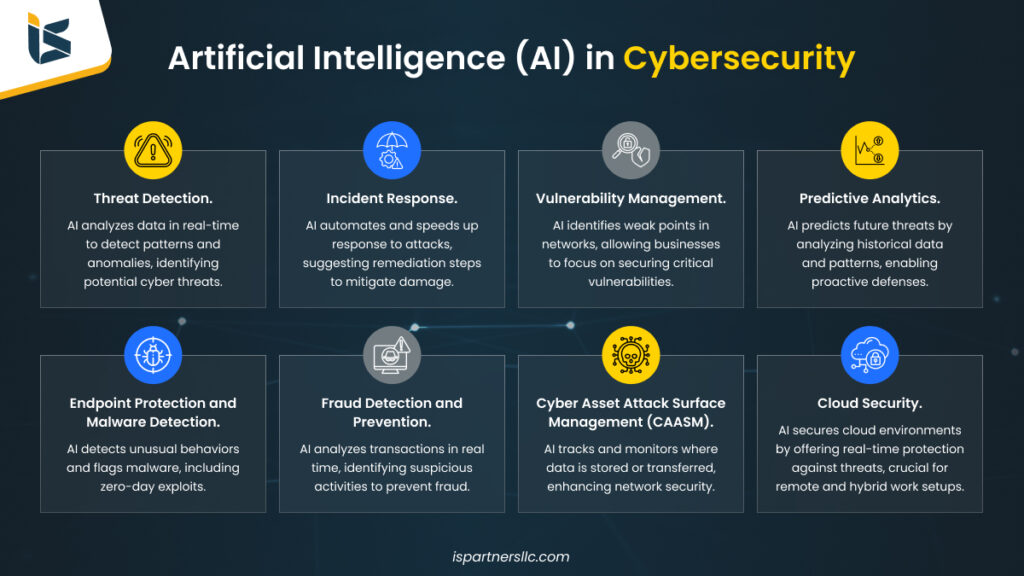

5. Cybersecurity, adversarial and supply-chain risks

AI systems open new attack surfaces. Model-poisoning, adversarial inputs, stolen training data, or misuse of generative AI for fraud are real threats (source: Thomson Reuters Legal).

Pain point: From a PeopleOps perspective, this means ensuring that AI systems involving people (employee data, HR automation) are built with proper security controls and that the organisation is prepared for potential incidents.

6. Compliance, regulation & reputational risk

Regulators are increasingly focused on AI risk. For example, large-scale AI misuse can trigger legal action (source: AP News). If your organisation uses AI in ways that affect people (employees, customers), you must ensure compliance with emerging frameworks and prepare for scrutiny of fairness, transparency and accountability.

Pain point: PeopleOps may need to work closely with legal/compliance teams to ensure that automation tools affecting people meet regulatory standards (e.g., employment law, data-protection rules).

7. Strategic & business risk

At the strategic level, poorly managed AI automation can lead to wrong decisions, loss of competitive advantage, failure to deliver ROI, or derailment of transformation programmes. For example, rolling out too many automations with high risk and little oversight reduces trust and wastes resources (source: Workday Blog).

Pain point: PeopleOps is often the bridge between business strategy (what roles, what transformation) and actual delivery. If automation fails, it affects workforce planning, skills roadmap and ultimately the organisation’s ability to derive value from the tech investment.

Real-World Scenario: A PeopleOps Lens

Let’s take a scenario to illustrate how these risks play out, from a PeopleOps vantage point.

Scenario: A global services firm introduces an AI-powered tool to support career-path recommendations and internal mobility. The system takes existing employee data (skills, performance, aspirations), organisational role data, and external labour-market data, and suggests two or three options to each employee: role moves, upskilling programmes, or lateral shifts.

What could go wrong?

- If the training data reflects historic promotion bias (e.g., few women moved into leadership), the system may recommend fewer leadership-moves for women. That’s a fairness risk.

- If employee data is incomplete (e.g., missing informal skills, unstructured data), recommendations could be irrelevant or inaccurate. That’s a data-quality risk.

- If the organisation or the market changes over time (new roles, external market shifts) but the model is not updated or monitored, the recommendations degrade. That’s an operational risk.

- Employees may mistrust the system if they can’t understand why a “recommended” role appears or, worse, if they feel they’re being pigeon-holed. That’s a cultural/trust risk.

- If the system has access to sensitive personal data and is hacked or misused, there’s a privacy / cybersecurity risk.

- If regulators scrutinise internal talent-systems (especially in regulated sectors), there could be compliance risk.

- If the pilot fails, employees resist future automation, ROI is low, transformation stalls: a strategic risk.

How PeopleOps can proactively manage this:

- Governance and oversight: Set up a cross-functional review board (PeopleOps + tech + legal + business) before deployment. Define ownership, roles, escalation paths.

- Bias audit & fairness checks: Ensure model inputs are reviewed for historical bias. Use fairness metrics. Keep a human in the loop for sensitive decisions.

- Data governance: Define what employee data is used, how it’s collected, who has access, security controls. Ensure transparency with employees.

- Explainability & communication: Provide employees with “why this recommendation” narratives. Train users on how the tool works, what it can and cannot do.

- Monitoring & human-backup: Monitor performance of the model over time. Flag when output diverges or outcomes deviate. Provide human review fallback.

- Skill & change management: Equip the HR/PeopleOps team and employees for change through face-to-face discussions and feedback loops, and adapt roles and career pathways accordingly.

- Compliance & privacy: Work with legal to align with data-protection laws (GDPR, local jurisdictions) and the fairness requirements of labour law.

- Feedback & continuous improvement: Collect employee feedback on tool usefulness, trust, fairness. Tweak and re-train as necessary.

By embedding PeopleOps into the entire lifecycle, from design and roll-out through monitoring to continuous improvement, you help ensure that AI automation does not become a risk to your workforce or culture and instead becomes a force multiplier.
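The monitoring and human-backup step can start very simply. The sketch below assumes you log whether each recommendation was accepted (1) or not (0), compares a recent window against a baseline window, and falls back to human review when the acceptance rate moves by more than a chosen tolerance. The 0.10 tolerance, the function names and the sample data are assumptions for illustration; a production setup would likely track several signals and use a proper statistical test.

```python
from statistics import mean


def drift_alert(baseline, recent, tolerance=0.10):
    """Return True when the recent acceptance rate moves away from baseline.

    `baseline` and `recent` are lists of 0/1 outcomes. `tolerance` is the
    largest absolute change in the rate that is treated as normal.
    """
    if not baseline or not recent:
        raise ValueError("both windows need at least one observation")
    return abs(mean(recent) - mean(baseline)) > tolerance


def route(recommendation, model_is_healthy):
    """Deliver the recommendation directly, or send it to a reviewer first."""
    channel = "deliver" if model_is_healthy else "human_review"
    return {"recommendation": recommendation, "channel": channel}


# Hypothetical acceptance data, invented for illustration.
baseline = [1] * 60 + [0] * 40   # 60% accepted at launch
recent = [1] * 40 + [0] * 60     # 40% accepted in the latest window

drifted = drift_alert(baseline, recent)
result = route("Lateral move: data analyst", model_is_healthy=not drifted)
print(drifted, result["channel"])
```

A falling acceptance rate does not say why the model is degrading, only that someone should look, which is why the fallback routes to a person instead of switching the tool off.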

Key Steps: A Risk-Management Framework for AI Automation

Here’s a practical framework (adapted from current best practice) that PeopleOps can use when overseeing AI-powered automation initiatives.

- Map – Understand the automation landscape:

  - What processes are being automated or augmented by AI?

  - What decisions will be made (or assisted) by the system?

  - Which stakeholders (employees, customers, partners) are impacted?

  - What are the objectives and what could go wrong? (Source: Wolters Kluwer.)

- Measure / Assess – Conduct risk assessment:

  - Evaluate data quality, bias, explainability, robustness and security (source: McKinsey & Company).

  - Prioritise key risks: which automations carry higher impact (people, reputation, regulatory)?

  - Establish metrics: e.g., fairness metrics, error rates, human override rates, employee-satisfaction scores.

- Manage / Mitigate – Design controls and safeguards:

  - Human-in-the-loop review and human override.

  - Monitoring & logging of AI decisions.

  - Model version control, retraining cycle, data refresh.

  - Employee transparency & training programmes.

  - Security controls, access controls, data encryption (source: IBM).

- Govern – Build governance, accountability & culture:

  - Senior leadership sponsorship and accountability.

  - Clear ownership of AI systems (who is responsible when something goes wrong?).

  - Ethics and fairness review board.

  - Integration with your broader ERM (enterprise risk management) and PeopleOps frameworks (source: Riskonnect).

- Monitor & Improve – Ongoing review:

  - Track KPIs and incident logs.

  - Get qualitative feedback from employees and teams.

  - Reassess risk as the business, the external environment and the technology evolve.

  - Adapt and evolve the automation (source: Wolters Kluwer).

By following these steps and embedding PeopleOps’ voice, you tie the technical automation agenda to the people side, ensuring adoption, fairness and risk control.
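Several of the metrics named in the framework (error rates, human override rates) fall out of a decision log almost for free, provided each AI decision is recorded with its model version and final outcome. The sketch below is one possible shape for such a log; the `Decision` fields and the sample entries are hypothetical, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """One logged AI decision. All fields are hypothetical."""
    model_version: str
    ai_output: str
    final_output: str        # what was actioned after any human review
    reviewed_by_human: bool
    later_found_wrong: bool


def kpis(log):
    """Compute review, override and error rates from a decision log."""
    total = len(log)
    if total == 0:
        return {"review_rate": 0.0, "override_rate": 0.0, "error_rate": 0.0}
    reviewed = [d for d in log if d.reviewed_by_human]
    overridden = [d for d in reviewed if d.final_output != d.ai_output]
    errors = [d for d in log if d.later_found_wrong]
    return {
        "review_rate": len(reviewed) / total,
        # Share of human-reviewed decisions where the reviewer changed the outcome.
        "override_rate": len(overridden) / len(reviewed) if reviewed else 0.0,
        "error_rate": len(errors) / total,
    }


# Hypothetical log entries, invented for illustration.
log = [
    Decision("v1", "approve", "approve", True, False),
    Decision("v1", "reject", "approve", True, False),   # reviewer overrode the AI
    Decision("v1", "approve", "approve", False, True),  # error found afterwards
    Decision("v1", "reject", "reject", False, False),
]

metrics = kpis(log)
print(metrics)
```

A rising override rate is worth discussing at the review board: it can mean the model is drifting, or that reviewers have stopped trusting it.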

How PeopleOps Can Add Unique Value

As a PeopleOps professional or team, you can bring specific capabilities to the table:

- Employee advocacy & trust building: You can serve as the voice of the workforce, ensuring that automation decisions are communicated, transparent and inclusive.

- Change-management & upskilling strategy: Automation will shift tasks and roles rather than eliminate people. You can help design reskilling, career-pathway programmes and job-redesign initiatives.

- Ethics & fairness lens: You can build and maintain policies that keep AI tools aligned with organisational values: fairness, diversity & inclusion, human dignity.

- Data-governance & privacy awareness: Even if the tech team builds the models, you ensure that the use of employee data aligns with consent, privacy and transparency requirements.

- Feedback loop & culture monitoring: You can track how employees perceive and engage with automation tools, highlight unintended outcomes, surface risks early.

- Cross-functional coordination: You’re a connector between tech, business units, legal/compliance. By being in the room early, you help avoid last-minute surprises.

Conclusion

AI-powered automation offers enormous potential: cost savings, speed, insights. But without robust risk management, especially around people, fairness, data and governance, you risk undermining trust, culture and value.

For PeopleOps teams, this means stepping up and not letting automation become a “tech only” issue. Instead, position yourselves as strategic partners, ensuring that automation is human-centred, fair, transparent, trusted and aligned with both business and people goals.

By using the framework above (map, measure, manage, govern, monitor), collaborating across functions, and keeping the voice of your people front and centre, you’ll help your organisation harness the power of AI automation and keep its workforce engaged, safe and valued.
