In today’s fast-evolving business landscape, the alliance between human talent and machine intelligence is no longer a futuristic vision; it’s already in our boardrooms, operations, and workflows. For PeopleOps leaders, understanding how to build trust in human-AI collaboration is crucial: trust affects acceptance of AI tools, quality of decisions, employee morale, and ultimately business outcomes.

In this blog we’ll explore what trust means in the context of human-AI teaming, why it matters, the pain points organisations face, real-world scenarios, and how PeopleOps functions can lead the way in fostering healthy, high-trust collaboration between humans and AI systems.

What do we mean by “trust” in Human-AI collaboration?

In human relationships, trust typically means believing someone will act competently, with good intentions, and will not take advantage of vulnerability. In human-AI contexts, researchers argue that those same dimensions apply, but the dynamics change because the “team member” is a machine or algorithm. (SpringerLink, PMC)

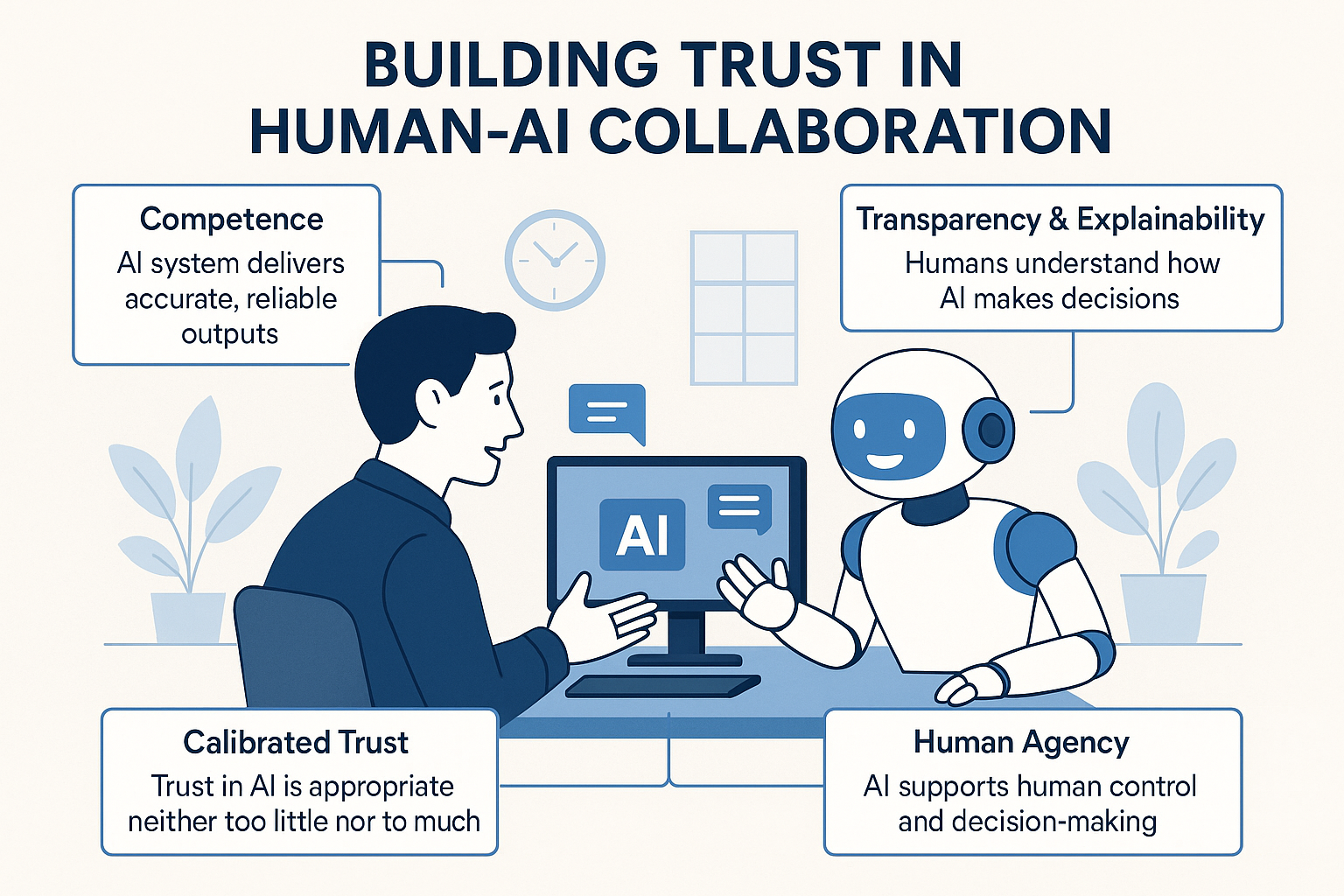

Key facets of trust in human-AI collaboration:

- Competence: Does the AI system deliver accurate, reliable outputs over time?

- Transparency & explainability: Can humans understand or inspect how the AI makes decisions?

- Agency & motive: While AI doesn’t have motives like a human, the design, purpose and oversight of the system signal whether its “intentions” align with human users and organisational goals. (SpringerLink)

- Calibrated trust: Neither too little (which leads people to ignore valuable AI assistance) nor too much (which leads to over-reliance and neglect of human judgement). (arXiv)

- Context & environment: Trust evolves depending on the task, setting, risk level and human-machine team dynamics. (ScienceDirect)

In short: we want humans and AI working with each other, with humans neither blindly accepting AI outputs nor resisting helpful AI out of distrust.

Why building trust matters for PeopleOps and organisations

From a PeopleOps perspective, where you are responsible for aligning people, processes and technology, the trust factor in human-AI collaboration carries several important implications:

- Adoption & ROI of AI initiatives: Without trust, employees don’t use the tools fully or correctly, and an AI system may under-deliver simply because people don’t engage with it. Misplaced trust is costly too: one global study found that 66% of workers rely on AI output without evaluating its accuracy, and 56% report errors in their work due to AI. (KPMG)

- Human-AI team performance: Research shows that combining humans and AI doesn’t automatically outperform the best human or the best AI alone; if trust is misaligned, combining them can even reduce performance. (MIT Sloan)

- Employee experience and morale: If staff feel AI is untrustworthy, opaque or undermines their role, you risk resistance, lower engagement, or fear of replacement.

- Ethical, legal and reputational risk: Wrong decisions, bias and lack of accountability all have trust implications. PeopleOps can play a role in governance, training and policy.

- Culture of innovation and change: Trusting collaboration with AI can create new capabilities, agility, and growth; but if trust is missing, AI becomes a cost centre or hazard.

Thus, PeopleOps must be proactively involved, not just handing over “AI tools” but helping integrate them into the human side of the equation: skills, mindset, culture, processes and metrics.

Common pain points & trust-breakers in human-AI collaboration

Here are some of the recurring challenges organisations face when trying to build trust between people and AI:

- Opaque “black-box” AI models

If employees can’t understand how the AI arrived at a recommendation, they may distrust or override it. Explainability matters. (The Science and Information Organization)

- Over-reliance or complacency

Trust that is too high (mis-calibrated) can lead humans to accept incorrect AI outputs without questioning. Researchers found uncalibrated AI confidence leads to misuse. (arXiv)

- Under-trust and resistance

At the other extreme, people may ignore AI input altogether, undermining potential gains. For example: in a study of human-AI teaming, the human+AI combo performed worse than AI alone because the human didn’t know when to trust the AI. (MIT Sloan)

- Mismatched role definitions

If people don’t understand how the AI fits their role (advisor? co-worker? assistant?), confusion sets in. Also, the system may not support empowerment and agency. (MDPI)

- Lack of organisational support

No training, unclear processes, missing governance, and no feedback loops all reduce trust. A systemic approach is needed.

- Ethical/bias concerns

If AI decisions are biased, inconsistent or unfair, trust erodes quickly. Stakeholders may question the motives or legitimacy of algorithms. (PMC)

Real-world scenario: PeopleOps in action

Let’s imagine a mid-sized tech company, “TechNova”, implementing an AI-assisted recruitment tool. The algorithm helps screen resumes and rank candidates based on competencies, cultural fit, and past outcomes.

Situation: After rollout, hiring managers seldom use the tool fully. They override many suggestions, frequently fall back to older manual methods, and complain that the AI “doesn’t get it”.

Pain points identified:

- The AI’s “why” (why a candidate is ranked high) is not visible → hiring managers feel they cannot trust it.

- They see no training on how the AI works or how to combine their judgment with the tool’s recommendation.

- When the AI makes an odd suggestion (e.g., an outlier candidate), managers override it, but the tool gives no indication of how that override feedback is incorporated.

- Some teams feel the AI is imposed top-down and they fear losing control of hiring decisions (agency concern).

PeopleOps interventions:

- Organise training sessions: “Understanding the AI model”, “When to trust the AI, when to question it”.

- Introduce transparent dashboards: candidate ranking + explanation bullets + past outcomes.

- Embed human-in-the-loop design: The AI recommendation is advisory; the hiring manager has the final decision but must record a short reason when overriding the AI, so the system and humans learn together.

- Set up feedback loops: monthly check-ins with hiring teams, measure how overrides map to outcomes, and refine the algorithm together.

- Cultivate a culture of “AI + Person” rather than “AI vs Person”: emphasise that human strengths (empathy, context, judgement) and machine strengths (processing large volumes of resumes, spotting patterns) are complementary.

Outcome: Over 3 months the adoption rate rose, hiring managers reported more confidence in the tool, and time-to-fill decreased while candidate quality rose. Trust between the workforce and the AI tool improved, creating better alignment.

A PeopleOps roadmap: Steps to build trust in human-AI collaboration

Here’s a practical framework that PeopleOps teams can follow to build and sustain trust in human-AI collaboration:

1. Define the human-AI teaming model

- Clarify roles: Is the AI advisory, co-creator, decision-maker, monitor or assistant?

- Determine tasks: Which parts will humans do, which will the AI do, and why? An MIT Sloan article noted that human+AI combinations don’t always outperform humans or AI alone unless the right tasks are assigned. (MIT Sloan)

- Identify risk levels, stakes and oversight required.

2. Foster transparency and explainability

- Provide explanations, confidence levels and uncertainty measures in AI outputs. The human user should know when to trust and when to question. (arXiv)

- Visualise how the AI arrived at a suggestion (features, data, logic) wherever feasible.

- Communicate limitations: what the AI can and cannot do (and in what contexts).

- Encourage a feedback mechanism: human users can challenge or correct the system.
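
To make the idea of “confidence levels” more concrete, here is a minimal Python sketch of a calibration check. It assumes each logged AI recommendation carries a stated confidence between 0 and 1 and was later marked correct or incorrect. The function name `calibration_table`, the record layout and the choice of five bins are illustrative assumptions, not features of any particular tool.

```python
from collections import defaultdict


def calibration_table(records, n_bins=5):
    """Compare stated AI confidence with observed accuracy, per confidence bin.

    records: iterable of (confidence, was_correct) pairs, where confidence
    is a float in [0, 1] and was_correct is a bool. (Hypothetical layout.)
    """
    bins = defaultdict(list)
    for confidence, was_correct in records:
        if not 0.0 <= confidence <= 1.0:
            raise ValueError(f"confidence out of range: {confidence}")
        # Map confidence to a bin index; 1.0 falls into the top bin.
        idx = min(int(confidence * n_bins), n_bins - 1)
        bins[idx].append((confidence, was_correct))

    table = []
    for idx in sorted(bins):
        items = bins[idx]
        mean_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        table.append({
            "bin": idx,
            "n": len(items),
            "mean_confidence": mean_conf,
            "accuracy": accuracy,
            # Positive gap: the AI sounded more confident than it was accurate.
            "gap": mean_conf - accuracy,
        })
    return table


if __name__ == "__main__":
    sample = [(0.9, True), (0.9, False), (0.3, False), (0.3, False)]
    for row in calibration_table(sample):
        print(row)
```

A large positive gap in a high-confidence bin suggests the AI’s stated confidence overstates its accuracy there, which is exactly where users should be encouraged to question it.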

3. Calibrate trust and encourage appropriate levels

- Train users not just on the tool but on how to combine human judgment + AI input.

- Develop metrics and dashboards: track when the AI was accurate, when errors occurred, how often humans overrode it, and why.

- Encourage reflection: when human overrides occur, was that justified? When humans accepted the AI, did it turn out well? Learning loops help.

- PeopleOps can facilitate “trust calibration sessions” (post-mortems of decisions involving AI) to surface lessons and build confidence.
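
The metrics above can be sketched in a few lines of Python. This is a rough illustration only: it assumes a decision log in which each entry records whether the AI recommendation turned out to be right and whether the human overrode it. The `Decision` class, its field names and the metric names are hypothetical, chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    ai_correct: bool   # did the AI recommendation turn out to be right?
    overridden: bool   # did the human override the AI recommendation?


def trust_metrics(log):
    """Summarise a decision log into simple trust-calibration indicators."""
    total = len(log)
    if total == 0:
        raise ValueError("decision log is empty")

    overrides = [d for d in log if d.overridden]
    accepted = [d for d in log if not d.overridden]

    return {
        "ai_accuracy": sum(d.ai_correct for d in log) / total,
        "override_rate": len(overrides) / total,
        # Overriding a correct recommendation hints at under-trust.
        "unjustified_override_rate": (
            sum(d.ai_correct for d in overrides) / len(overrides)
            if overrides else 0.0
        ),
        # Accepting an incorrect recommendation hints at over-reliance.
        "accepted_error_rate": (
            sum(not d.ai_correct for d in accepted) / len(accepted)
            if accepted else 0.0
        ),
    }


if __name__ == "__main__":
    log = [
        Decision(ai_correct=True, overridden=False),
        Decision(ai_correct=True, overridden=False),
        Decision(ai_correct=False, overridden=False),
        Decision(ai_correct=True, overridden=True),
        Decision(ai_correct=False, overridden=True),
    ]
    print(trust_metrics(log))
```

Tracked over time, a falling unjustified-override rate alongside a low accepted-error rate would be one sign that trust is becoming better calibrated. The recorded override reasons are still needed to explain the “why”.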

4. Support human competencies & mindset

- Upskill employees: beyond technical training, focus on data literacy, understanding algorithmic output, being comfortable working alongside AI.

- Promote mindset: the AI is a partner, not a replacement; the human’s role shifts but remains critical.

- Encourage psychological safety: users should feel safe to speak up when AI suggestions feel wrong, and organisational culture must support this. Research shows psychological safety buffers negative effects of dispersed trust within human-AI teams. (CEUR-WS)

5. Establish governance, oversight & ethics

- PeopleOps should partner with technology, legal and ethics teams to set policy for AI use: bias checks, fairness, accountability.

- Clarify responsibility: who owns the decision, how disputes get resolved, how human judgement overrides work.

- Monitor outcomes, not just usage: track whether AI integration is delivering value, and where trust breakdowns occur.

- Align leadership messaging: Leadership plays a key role in setting the tone for human-AI collaboration. Transformational, ethical, adaptive leadership strengthens trust. (MDPI)

6. Continuously iterate and improve

- AI systems and human workflows evolve: PeopleOps should ensure constant feedback, adjustment, training refreshers.

- Set up cross-functional teams (PeopleOps, AI engineers, end users) to review collaboration outcomes quarterly.

- Celebrate successes of human-AI teams and surface learnings from failures. Trust grows when teams see clear benefits and transparent treatment of mistakes.

Specific PeopleOps considerations for HR/People teams

For PeopleOps teams, here are some HR-specific considerations when integrating AI tools (e.g., recruiting, performance management, learning & development, workforce planning):

- Onboarding and training: Introduce the AI tool during onboarding, clarify how it impacts roles and how humans and AI will collaborate.

- Role redesign and change management: For example, when AI takes over repetitive data tasks, the human role may shift to more strategic, creative or interpersonal work. Communicate the change clearly to avoid fear and build trust.

- Performance metrics: If using AI in performance dashboards, ensure transparency of metrics, fairness, and avoid “black-box” assessments that erode trust.

- Diversity & inclusion: AI systems can perpetuate bias; PeopleOps must audit, monitor and ensure the AI collaborator is aligned with fairness goals. When bias is spotted, users must be empowered to question the system.

- Employee voice and participation: Include representative users early in the design and rollout of AI collaborations. This builds buy-in and trust.

- Feedback culture: Implement channels where employees can raise concerns or suggest improvements about AI tools, ensuring they don’t feel sidelined or replaced.

- Leadership communication: Leaders should emphasise the human-plus-AI narrative, not the human-versus-AI fear. They must model trust behaviours: using the tool properly, acknowledging when AI output was wrong and how it was corrected.

Key takeaways

- Trust in human-AI collaboration is not automatic: it needs design, culture, process, and a human-centric focus.

- PeopleOps plays a central role: aligning people and culture with technology, not just deploying AI and hoping for the best.

- Appropriate trust (neither blind acceptance nor complete rejection) is the goal, and it requires transparency, calibration, human empowerment and oversight.

- By architecting human-AI teams thoughtfully, organisations can tap the combined strength of human intuition, context, empathy and AI’s speed, scale and pattern-recognition.

- Ultimately: human-AI collaboration is not about replacing humans or automating everything. It’s about augmenting human capabilities, unlocking new value, and creating workplaces where people and machines contribute meaningfully together.

Call-to-action for PeopleOps leaders

- Audit your current AI tools: How much are employees using them? What do employees think of them? Are there usage barriers, trust issues, or role misalignments?

- Design a trust-building programme: Workshops for users, transparent dashboards, human-in-the-loop processes, feedback loops.

- Set up governance and measurement: Define success metrics (both human experience and business outcomes), monitor trust and collaboration health over time.

- Lead with culture: Communicate the role shift clearly, emphasise that people remain central, promote stories of human + AI success.

By putting trust at the heart of your human-AI collaboration strategy, you position your organisation and your people for the future of work.
